Philipp Wollermann is a Staff Software Engineer at Google. He was on the open-source team of Google's build system Bazel and worked on Bazel for over eight years.

At UnblockConf '21, Philipp shared what the Bazel team learnt while implementing their own Bazel CI on top of Buildkite, along with some Bazel tooling tips and best practices that will help speed up your own build pipelines.

What is Bazel?

Bazel is Google’s open-source build system, created in 2015 to solve specific issues Google was tackling. Since then, it has been developed with the community and continues to grow in popularity. Bazel can provide considerably faster build times: it recompiles only the files that need to be recompiled and skips re-running tests whose inputs haven’t changed.

About Bazel:

- It’s an open-source build and test tool created by Google and their community.

- It has a high-level, human-readable build language.

- It’s fast, reliable, hermetic, incremental, parallelized, and extensible.

- It supports multiple languages, platforms, and architectures.

What is Bazel CI?

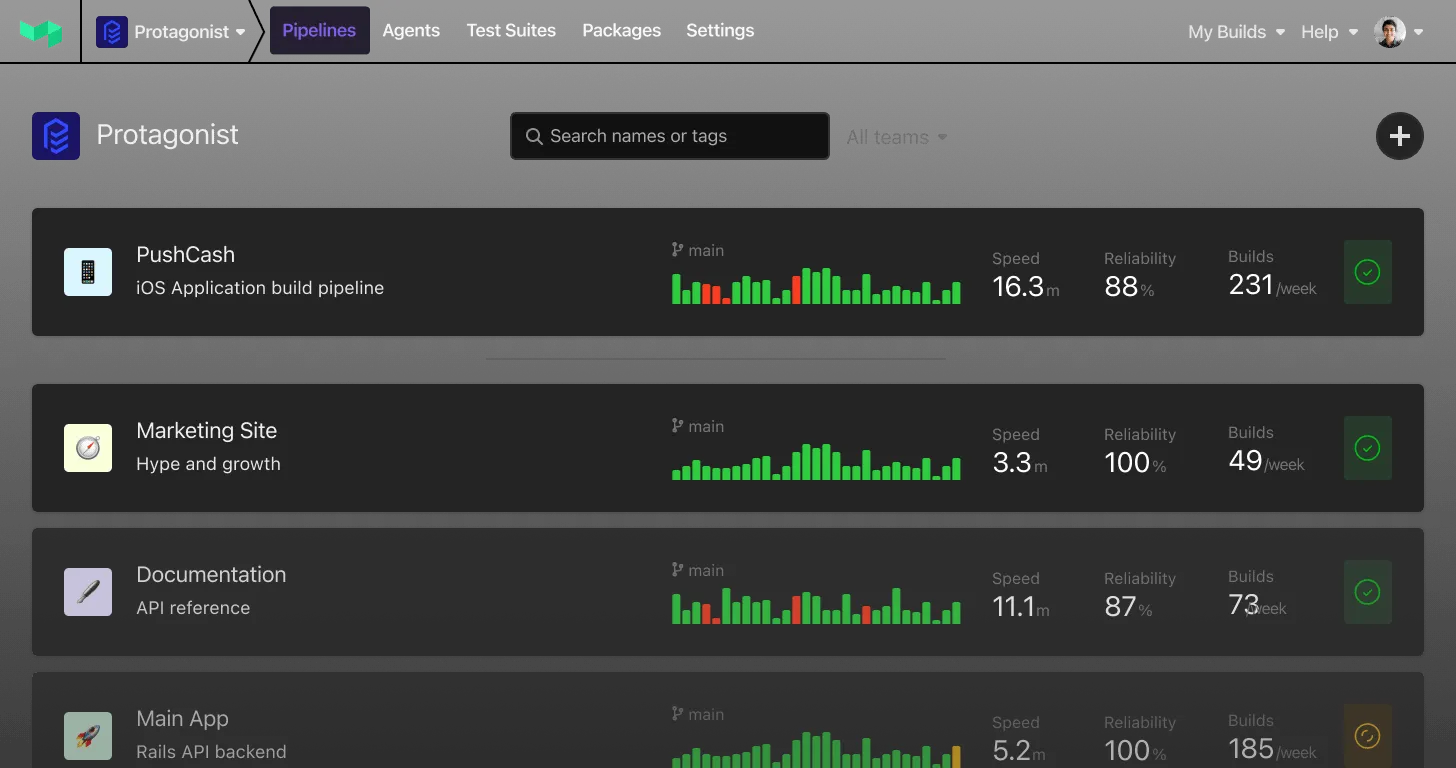

Bazel CI is Bazel’s custom CI/CD system for testing and releasing Bazel and its ecosystem. It is built on top of Buildkite with some custom VCS integrations, a configuration DSL, and its own infrastructure, all written and maintained by the Bazel team. The team uses Bazel CI and Buildkite for pre-submit, post-submit, and downstream testing. Downstream testing means testing Bazel against projects that use Bazel, which minimises regressions (with automatic culprit finding) and maximises stability when building and deploying Bazel releases.

Getting started

“The problems you encounter and the way you structure your CI setup depend heavily on how your source code is structured.

Perhaps you have:

- a monorepo

- or a collection of project files

- or maybe just one big project file (called a `WORKSPACE` file in Bazel)

- or you might be using the classic open-source approach of having a set of completely independent Git repositories, hosted on a remote source control manager such as GitHub or Bitbucket.

Getting started is relatively easy for all of these cases.”

Philipp Wollermann

In most cases, starting out with Buildkite and Bazel is relatively easy, according to Philipp. You can:

- Create a Buildkite pipeline for your repository

- Write a YAML config file that runs `bazel build //src:bazel` and `bazel test --build_tests_only //...`

- You're done ✅

- Are we done? 😅

This approach works and performs quite well, but if you have a large monorepo or a large collection of repos, it isn't likely to scale. The `...` in `//...` is a wildcard that matches every target in the repository, so all of its tests will be run. You can use the `--build_tests_only` flag to prevent building non-test targets during `bazel test`, but for huge repositories it can still be slow, computationally intensive, or even fail completely.

So, once you've got Buildkite and Bazel working together, what happens when you hit the limits of what the initial implementation can offer? Let's take a look at some common problems and Philipp's suggested solutions.

Problem: Our Bazel CI pipeline takes > 1 hour to complete

Solution: Parallelisation and target sharding

“Time spent waiting for your build to complete is okay if it’s post-submit, but if you’re waiting for pull requests to build, developers get very uneasy waiting around for test feedback,” says Philipp.

“Combine Buildkite’s native sharding support with `bazel query` to turn one big job into `n` jobs that take roughly `1/n` time.”

Philipp Wollermann

Bring down build times with:

- Buildkite's parallel jobs feature which:

  - Runs the same job N times

  - Assigns each parallel job an increasing index, exposed as an environment variable

- Combine this with a script that:

  - Replaces the `...` wildcard in `bazel test --build_tests_only //...` with the full list of test targets

  - Shards this list of test targets into N buckets according to the parallel job index using `bazel query`.

Here’s an example Python script that Philipp extracted from the team’s Bazel CI logic that illustrates how to implement target sharding by combining Buildkite’s parallelisation with bazel query to significantly reduce build times.
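As a rough illustration of the idea (not the Bazel team's script), the sharding step can be sketched in Python as follows. It assumes the job runs in a step that uses Buildkite's `parallelism` setting, so that `BUILDKITE_PARALLEL_JOB` and `BUILDKITE_PARALLEL_JOB_COUNT` are set; the function names and the round-robin bucketing are choices made for this sketch only.

```python
import os
import subprocess


def list_test_targets(pattern="//..."):
    """Expand a wildcard pattern into a sorted list of test target labels."""
    # tests(...) is a bazel query function that returns the test rules in its argument.
    output = subprocess.check_output(
        ["bazel", "query", f"tests({pattern})", "--output=label"], text=True
    )
    return sorted(line.strip() for line in output.splitlines() if line.strip())


def shard_targets(targets, shard_index, shard_count):
    """Return the targets for one shard, dealing them out round-robin."""
    ordered = sorted(targets)  # every job must see the same order
    return [t for i, t in enumerate(ordered) if i % shard_count == shard_index]


def main():
    # Buildkite sets these variables for each job of a parallel step.
    shard_index = int(os.environ.get("BUILDKITE_PARALLEL_JOB", "0"))
    shard_count = int(os.environ.get("BUILDKITE_PARALLEL_JOB_COUNT", "1"))
    my_targets = shard_targets(list_test_targets(), shard_index, shard_count)
    if my_targets:
        # "--" separates flags from the explicit list of target labels.
        subprocess.check_call(["bazel", "test", "--build_tests_only", "--"] + my_targets)
```

Each parallel job would call `main()` from its command. Because every job sorts the same target list before bucketing, the shards do not overlap and together cover every test. Round-robin keeps the sketch simple; it does not balance shards by test duration.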

Problem: Our monorepo is too big and `bazel test //...` doesn’t scale

Solution: Target skipping

It's true that Bazel is an incremental build system with built-in caching. Users should be able to rely on running `bazel test //...` to limit the scope of tests that are re-run to those relating to changes in a commit. According to Philipp, "this works, but eventually your repository might become so large that provisioning machines with enough RAM and CPU for Bazel to be able to work on the full dependency graph is too expensive, or even impossible".

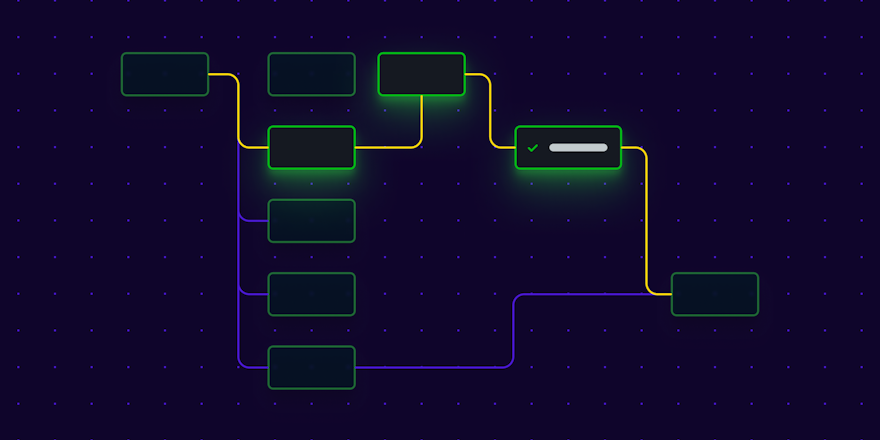

The answer, then, is to calculate affected targets computationally. You can use path-based triggers that:

- Look for changes in the `git commit` triggering the job

- Use this diff to decide which projects and targets to test

- See an example in Buildkite Feature request #243

The optimal solution is to calculate affected targets by using bazel query.
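One way this could look is sketched below in Python. It is an assumption-laden outline, not the approach used by Bazel CI or bazel-diff: the default `origin/main` base revision and the function names are hypothetical, and it assumes every changed path can be resolved by Bazel as a file label.

```python
import subprocess


def changed_files(base="origin/main", head="HEAD"):
    """List files that differ between two Git revisions."""
    output = subprocess.check_output(
        ["git", "diff", "--name-only", f"{base}...{head}"], text=True
    )
    return [line for line in output.splitlines() if line]


def affected_tests_query(files, universe="//..."):
    """Build a query for the test targets that depend on any of the given files."""
    # rdeps(u, x) returns the targets in universe u that transitively depend on x;
    # tests(...) then narrows the result to test rules.
    file_set = " ".join(files)
    return f"tests(rdeps({universe}, set({file_set})))"


def affected_tests(files):
    """Run the query and return the affected test labels (empty if nothing changed)."""
    if not files:
        return []
    # --keep_going lets the query continue past paths that are not part of any
    # Bazel package (for example, docs or deleted files).
    result = subprocess.run(
        ["bazel", "query", "--keep_going", affected_tests_query(files)],
        capture_output=True,
        text=True,
    )
    return sorted(line for line in result.stdout.splitlines() if line)
```

The result of `affected_tests(changed_files())` can then be passed to `bazel test` in place of `//...`. Paths containing spaces or query metacharacters would need quoting, which this sketch leaves out.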

Helpful resources for getting started:

- BazelCon 2019 presentation - Selective Testing, Benjamin Peterson, Dropbox

- bazel-diff - A CLI tool Tinder created that lets users identify the exact set of targets affected between two Git revisions.

Target skipping means you’re not only optimising your builds, you’re also keeping your infrastructure and cloud-compute costs under control.

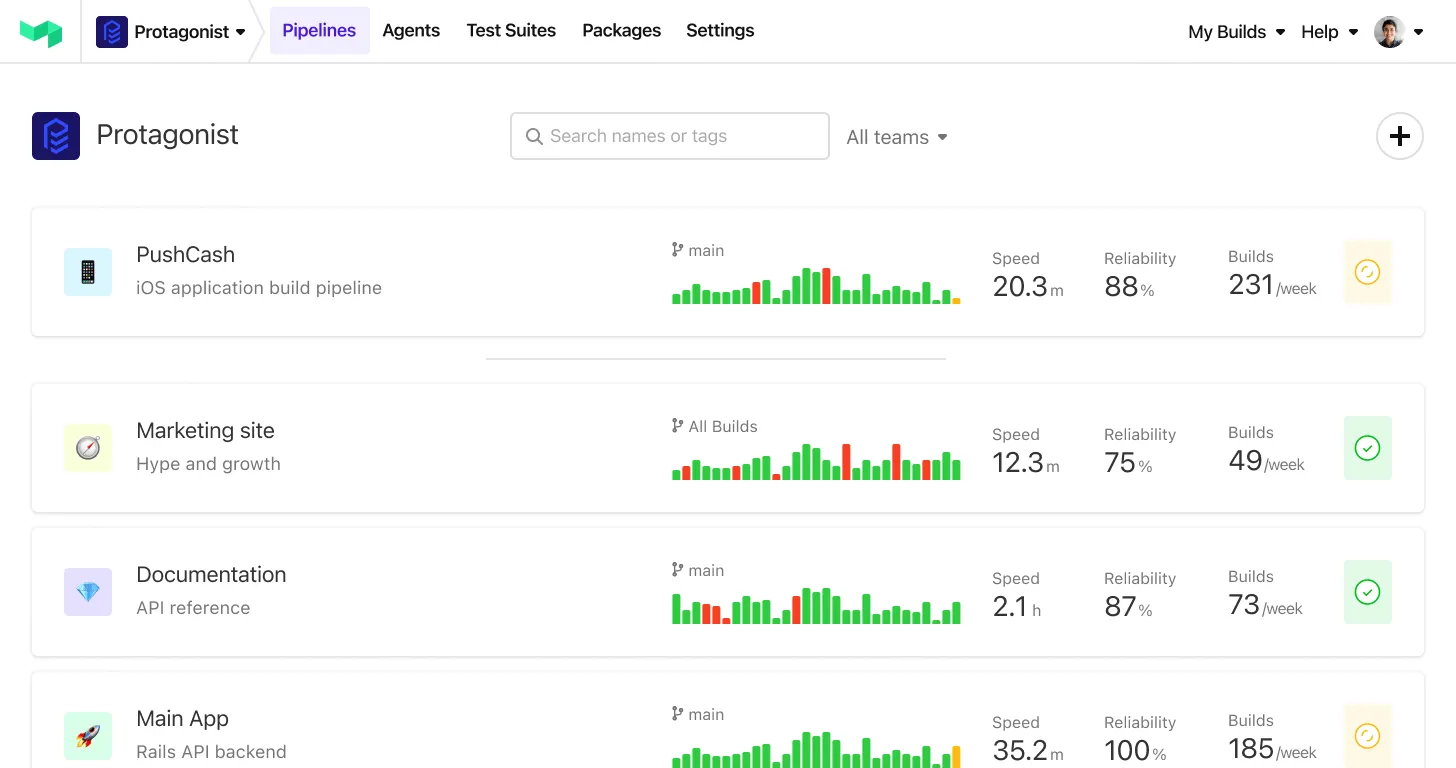

Problem: The single pipeline <-> monorepo mapping isn’t working for us anymore

Solution: Split it up into multiple pipelines

There are a number of reasons why you might want to split one big pipeline for your repository into multiple pipelines. You might be:

- Exceeding the maximum number of jobs allowed in one invocation of Buildkite

- Finding it difficult to browse build results

- Wanting individual build batches for individual projects

Philipp suggests applying the 1:1:1 rule:

- 1 team - ideally your Buildkite team is the same as the GitHub project team

- 1 project - per pipeline

- 1 pipeline - for the team to manage

It’s important to remember that your monorepo’s webhook will continue to fire on every commit, so with this new architecture many commits will be irrelevant to a given team, project, and pipeline. Ideally you would implement target skipping to reduce the noise once you have re-architected your CI to have more granular pipelines.

Problem: Our `git clone` step is taking way too long

Solution: Provide workers with up-to-date copies of repos and checkouts

Inevitably, as the size of your repository grows, the `git clone` step in your pipeline will become increasingly slow and painful. Repeatedly downloading Bazel (and other utilities) needlessly uses network resources that could be better used elsewhere.

Philipp recommends one of these approaches to reduce the time spent waiting for a build to start:

Buildkite Agent git-mirrors (experimental feature):

- The agent automatically maintains a git mirror on disk of all repositories that your agent has tested

- For subsequent jobs that run on the agent, git can fetch objects directly from this mirror without needing to download them from the network

- Read about the `git-mirrors` feature here

Stateful workers:

- Store the checkout on disk

- The mirror refreshes over time

Stateless workers:

- As workers only run a single job and then get replaced, using git-mirrors will not automatically help in this case

- However, you can pre-prime workers with a snapshot of all the repositories tested in CI:

  - Use the Buildkite API to get a list of all Git repositories that are used by your pipelines

  - `git clone --bare` them all into the same directory structure that the agent's `git-mirrors` feature expects and update them, e.g. daily, via `git fetch`

  - Upload a `tar.gz` copy of this directory structure somewhere and extract it into the right location when building your agent image

  - Result: When your stateless worker boots from the image, it will already have a reasonably up-to-date mirror of all relevant repositories and `buildkite-agent` only has to `git fetch` the difference, saving a lot of network bandwidth and time

- Some examples:

  - How Bazel uses conditional Git clone logic – `gitbundle.sh`

  - How Bazel pre-seeds their Git mirror – `docker.sh`

Problem: Lack of convention makes our CI difficult to understand and maintain

Solution: Developer-friendly abstraction via a custom high-level DSL

Initially the Bazel team had “a classic CI setup – most things were shell scripts, project owners had particular ideas how these shell scripts should be written, we ended up with 50 different ways to do roughly the same thing. The result meant our CI was very difficult to reason about and modify.”

Philipp Wollermann

The team wanted Bazel CI to have a high-level abstraction that worked with Bazel’s primitives. To achieve this they designed a custom high-level DSL that all projects on Bazel CI use.

    platforms:
      # ...
      windows:
        build_targets:
          - //...
        test_targets:
          - //...
          # Windows doesn't have a `python3` executable on PATH.
          - -//:py3_bazelisk_test
        test_flags:
          - --flaky_test_attempts=1
          - --test_env=PATH
          - --test_env=PROCESSOR_ARCHITECTURE
          - --test_output=streamed

A list of platforms can be passed in:

- macOS

- windows

- ubuntu2204

Each of the platforms can:

- Set the `build_targets`

- Set the `test_targets` and exclude particular tests if they don't need to be run on a particular platform

- Set some custom flags to pass to Bazel

    steps:
      - command: |-
          curl -fsS "https://raw.githubusercontent.com/bazelbuild/continuous-integration/master/buildkite/bazelci.py?$(date +%s)" -o bazelci.py
          python3 bazelci.py project_pipeline --file_config=.bazelci/presubmit.yml --monitor_flaky_tests=true |
          buildkite-agent pipeline upload
        label: ":pipeline:"
        agents:
          - "queue=default"

Pipelines have a single step configured in the Buildkite web UI that:

- Downloads the `bazelci.py` script from the repository

- Runs the `bazelci.py` script, which generates and uploads the rest of the jobs from the DSL programmatically:

  - Reads the Bazel CI YAML format

  - Converts this to the YAML format Buildkite expects

  - Then uses the `buildkite-agent pipeline upload` command
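To make the conversion step more concrete, here is a small Python sketch of the general idea. It is not taken from `bazelci.py`: the function names, the use of the platform name as the agent queue, and the choice to emit JSON rather than YAML are all assumptions made for illustration.

```python
import json


def bazel_command(verb, targets, flags=()):
    """Assemble a Bazel command line.

    The "--" keeps negative patterns such as -//:py3_bazelisk_test from being
    parsed as flags.
    """
    return " ".join(["bazel", verb, *flags, "--", *targets])


def steps_for_config(config):
    """Turn a {platform: settings} mapping into a list of Buildkite command steps."""
    steps = []
    for platform, settings in config["platforms"].items():
        commands = []
        if settings.get("build_targets"):
            commands.append(bazel_command("build", settings["build_targets"]))
        if settings.get("test_targets"):
            commands.append(
                bazel_command("test", settings["test_targets"], settings.get("test_flags", ()))
            )
        steps.append({
            "label": platform,
            "command": commands,
            # Hypothetical convention: one agent queue per platform name.
            "agents": {"queue": platform},
        })
    return steps


# The DSL example above, already parsed into a dictionary.
config = {
    "platforms": {
        "windows": {
            "build_targets": ["//..."],
            "test_targets": ["//...", "-//:py3_bazelisk_test"],
            "test_flags": ["--flaky_test_attempts=1", "--test_output=streamed"],
        }
    }
}

# The generated pipeline is printed so it can be piped into
# `buildkite-agent pipeline upload`, which accepts JSON as well as YAML.
print(json.dumps({"steps": steps_for_config(config)}, indent=2))
```

Keeping the platform-specific details inside one generator is what lets project owners edit only the short DSL file.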

This DSL helped Bazel CI users a lot, transforming it “into a self service system that is very easy to modify and understand. Users no longer have to deal with the implementation details of how to do things in multiple platforms”, instead having just one high-level, consistent format for everything.

Problem: Rolling out changes for a large number of pipelines is painful

Solution: Eliminate repetitive manual tasks with infrastructure as code

“We made it so easy to create new pipelines (with our new DSL) that over time we accumulated a lot of pipelines. Eventually, we needed to update a plugin in the `bazelci.py` script. It was then I realized I’d probably have to open a hundred Chrome tabs and make this change manually – I didn't see any other way.”

Philipp Wollermann

Not a great way to maintain Buildkite at scale.

Fortunately there’s a better way to manage your Buildkite pipelines at scale! The Bazel team migrated their pipelines to the official Terraform Buildkite Provider, meaning:

- No more manual clicking around the web UI

- Modifying Buildkite pipelines as code means you’re able to:

  - Check changes into the repository

  - See a clear diff of changes

  - Make sure changes are safe before applying

- All changes are version controlled and visible to all project team members.

You can check out the Bazel team’s GitHub repository - it contains all the Terraform configuration files used to manage their Buildkite pipelines.

Problem: Testing untrusted code in Buildkite can have negative flow-on effects

Solution: Stateless workers

With Buildkite, you’re in control of your own agent infrastructure, and this means deciding whether to use stateful or stateless workers.

Stateful workers give you the ability to cache things on disk, such as build system output and git clones. This improves performance and makes auto-scaling simpler. The downside is that testing untrusted code on stateful workers is risky: third-party pull requests may cause unintended side effects that impact future builds. For this reason, wherever possible, Bazel CI uses stateless workers.

Stateless workers are configured to:

- Terminate the Buildkite agent after each job

- Have systemd shut down the VM when the Buildkite agent exits

- Have the instance group manager detect when the machine has shut down

- Replace it with a completely fresh VM meaning no state is carried over between two jobs

Physical machines:

- Run a thorough cleanup step after each job

- Keep trusted and untrusted machines on different networks

Philipp’s recommended approach to managing bare-metal stateless workers safely:

- Run your Buildkite agent as a completely separate user

- Use a monitoring script that watches the agent

- As soon as the agent exits after a job, perform some cleanups such as:

  - Terminate all still running processes spawned by the job with `kill -9` (or equivalent)

  - Restore the home directory of the buildkite-agent user from a fresh copy

  - Clean up any temporary files that were created by the job.

Here’s an example of a clean-up script from Bazel CI for Buildkite Agents on macOS.

Problem: My stateful workers are doing too much work

Solution: Local caching

If you’re using stateful workers you can make use of Bazel’s local caching features: repository caching and disk caching. Bazel is incremental by default and should only do the minimum work required between two builds.

Repository caching:

- Keeps a copy of all downloaded external dependencies on disk and reuses them when a subsequent Bazel invocation needs them instead of downloading them again

- Is enabled by default

- Set the path manually with `bazel test --repository_cache=/home/buildkite/repocache`

Disk caching:

- Is a local persistent cache for the results of build actions and tests

- Entries in the disk cache are keyed by a hash of the inputs that go into an action

- The hash is computed from various information that could possibly influence the results of the action, including:

  - Input files (e.g. source code)

  - Tools (e.g. compiler binaries)

  - The full command-line of the action

  - Environment variables

- Disk caching is disabled by default

- Enable by running `bazel test --disk_cache=/home/buildkite/diskcache`

Having access to these caches means Bazel can access the outputs of an action immediately without needing to re-run, even if it’s shut down between jobs or bazel clean is run.
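The keying idea described for the disk cache can be illustrated with a short Python sketch. This is a conceptual model only, not Bazel's actual digest format: the function name, the field layout, and the choice of SHA-256 over length-prefixed fields are assumptions for the example.

```python
import hashlib


def action_cache_key(input_files, tool_files, command_line, env):
    """Hash everything that can influence an action's result into one key.

    input_files / tool_files: dict of path -> file content (bytes)
    command_line: list of argument strings
    env: dict of environment variable name -> value
    """
    digest = hashlib.sha256()

    def add(kind, name, value):
        # Length-prefix each field so adjacent fields cannot run together.
        for part in (kind.encode(), name.encode(), value):
            digest.update(len(part).to_bytes(8, "big"))
            digest.update(part)

    for path in sorted(input_files):
        add("input", path, input_files[path])
    for path in sorted(tool_files):
        add("tool", path, tool_files[path])
    for position, arg in enumerate(command_line):  # argument order matters
        add("arg", str(position), arg.encode())
    for name in sorted(env):
        add("env", name, env[name].encode())
    return digest.hexdigest()
```

If any input, tool, argument, or environment variable changes, the key changes and the action is re-run; if nothing changes, the stored result can be reused without running the action.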

Problem: I need to share the cache with multiple workers or stateless workers

Solution: Remote caching

Unfortunately, stateless workers are unable to utilize local caches, as the cache is lost when the machine gets replaced after each job. In this case a remote cache can be used. Bazel can query and upload cache entries for build outputs and test results to a shared remote cache, using HTTP WebDAV or a gRPC-based API.

Philipp’s tips for remote caching

- Use bazel-remote:

  - The Bazel team’s simple canonical cache server implementation written in Go

  - It has smarts for what Bazel stores to keep the cache within limits

- Avoid cache poisoning:

  - Cache poisoning occurs when platform-specific information that Bazel doesn’t track affects build outputs

  - You can avoid it by including a cache key based on platform identifiers that you know might affect the build, but that Bazel can't reliably track on its own
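A platform-based cache key of this kind might be derived as in the Python sketch below. The choice of identifiers, the cache URL, and the property name `cache-silo-key` are assumptions for illustration; the sketch passes the key through Bazel's `--remote_default_exec_properties` flag so that it becomes part of each action's cache key, which is one possible way to separate entries per platform.

```python
import hashlib
import platform


def platform_cache_key(identifiers):
    """Hash a list of platform identifiers into a stable key."""
    digest = hashlib.sha256()
    for item in identifiers:
        digest.update(item.encode("utf-8"))
        digest.update(b"\0")  # separator so ["ab", "c"] differs from ["a", "bc"]
    return digest.hexdigest()


def remote_cache_flags(cache_url, identifiers):
    """Return Bazel flags that keep each platform's cache entries apart."""
    key = platform_cache_key(identifiers)
    return [
        f"--remote_cache={cache_url}",
        # "cache-silo-key" is just a property name chosen for this sketch.
        f"--remote_default_exec_properties=cache-silo-key={key}",
    ]


# Example identifiers; pick whatever is known to affect your build outputs.
identifiers = [platform.system(), platform.machine(), platform.release()]
print(remote_cache_flags("grpc://cache.example.com:9092", identifiers))
```

Workers with different identifiers then read and write disjoint sets of cache entries, at the cost of a lower hit rate across platforms.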

Problem: Running Bazel builds and tests locally is too slow

Solution: Remote execution

By default, Bazel executes builds and tests on your local machine. Remote execution of a Bazel build allows you to distribute build and test actions across multiple machines, such as a datacenter. It also gives you an automatic persistent shared cache across all of your CI workers.

“Even if you have a nice 64-core workstation, you can only run 64 actions in parallel for integration tests, probably less because they tend to use a lot of resources. This is nice, but what's even nicer is to run thousands of actions in parallel - and with remote execution, you can make this work.”

Philipp Wollermann

Remote execution can be complex to set up but provides great benefits:

- Massively increased parallelism of actions (you can run all of your tests at once)

- An automatic, persistent, shared cache across all your CI workers

- CI worker machines can be cheaper, lower spec, because they no longer run actions locally

- More secure and hermetic, because actions can run in firewalled, isolated containers

- Reduced risk of cache poisoning, because the remote execution backend knows exactly what goes into each executed action and can track it

Some options to get you started with Bazel's remote execution feature:

- Set up one of the open-source backends on a cluster of machines (in no particular order):

- Implement your own custom backend for the API

- Work with an external expert or consultant to get set up

Problem: We don’t want to wait around for test logs when a test fails

Solution: bazelci-agent tool

Initially, test logs were made available to users only after the build had completed, using the Buildkite Agent’s artifact upload feature, but users wanted faster access to test failure logs.

Developers wanted faster feedback, asking “if I already know that a test is failing, why do I have to wait for the CI job to finish before I’m able to read it?”

Philipp Wollermann

In response to this very valid question, the team built the Bazel CI Agent tool.

The bazelci-agent tool:

- Listens to a Build Event Protocol (BEP) stream (events contain a build identifier, set of child event identifiers, and a payload)

- Identifies failing test logs in real time

- Uploads logs continuously using the `buildkite-agent artifact upload` command
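The core of that loop can be sketched in Python. This is not the bazelci-agent implementation; it assumes the BEP is read as newline-delimited JSON (for example from the file written by `--build_event_json_file`) and that test summary events carry `overallStatus` and a `failed` list of log URIs. The function name is hypothetical.

```python
import json
from urllib.parse import urlparse
from urllib.request import url2pathname


def failed_test_logs(bep_lines, statuses=("FAILED", "TIMEOUT")):
    """Yield (label, [log paths]) for each test summary with a matching status."""
    for line in bep_lines:
        line = line.strip()
        if not line:
            continue
        event = json.loads(line)
        summary = event.get("testSummary")
        if not summary or summary.get("overallStatus") not in statuses:
            continue  # not a test summary, or the test did not fail
        label = event["id"]["testSummary"]["label"]
        # Each entry in "failed" points at a log file via a file:// URI.
        logs = [url2pathname(urlparse(f["uri"]).path) for f in summary.get("failed", [])]
        yield label, logs
```

Because the function is a generator, a caller that feeds it lines as they are appended to the BEP file can pass each yielded log path to `buildkite-agent artifact upload` while the build is still running.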

Check out the team’s bazelci.py setup to see an example of the integration in use.

Problem: We have a problem with unreliable and flaky tests

Solution: Bazel’s automatic flaky detection and retry

Where there are tests, there are flaky tests – tests that might fail 20% of the time, for a reason that is often unclear.

Bazel has built in support for detecting flaky tests:

- Flaky tests are marked as status `FLAKY` in the output and the BEP

- Bazel can automatically detect and retry `FLAKY` tests

  - Specify the number of retries to attempt: `bazel test --flaky_test_attempts=3 //...`

  - If a test fails, it will be retried up to the specified number of times. If it passes at least once, it will be marked as flaky in the output and the BEP and Bazel will consider the overall `bazel test` invocation to have passed

- Bazel CI has a basic flaky test dashboard

  - bazelci-agent checks for any tests with the status `FLAKY`

  - If `FLAKY` test logs are found it uploads the BEP JSON for further processing to a GCS bucket

  - The dashboard regularly scans the bucket and shows flaky tests in a “high-score” list
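The retry behaviour described above can be modelled in a few lines of Python. This is a simplified model rather than Bazel's code; the function names are hypothetical, and the attempt count is treated as the total number of runs allowed per test.

```python
def run_with_retries(run_test, max_attempts=3):
    """Run a test up to max_attempts times and classify the outcome.

    run_test: zero-argument callable returning True on pass, False on failure.
    Returns "PASSED", "FLAKY" or "FAILED".
    """
    for attempt in range(1, max_attempts + 1):
        if run_test():
            # A pass on the first attempt is clean; a later pass means
            # the test failed at least once before succeeding.
            return "PASSED" if attempt == 1 else "FLAKY"
    return "FAILED"


def invocation_passed(statuses):
    """The overall invocation passes as long as no test ended up FAILED."""
    return all(status != "FAILED" for status in statuses)
```

A test that fails once and then passes is reported as `FLAKY` but does not fail the build, which is why a dashboard is needed to keep such tests visible.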

“Of course, you’ll want to fix flaky tests because they are a pretty bad performance drag on your pipelines, they can cause the runtime to explode, and Bazel has to retry them multiple times.”

Philipp Wollermann

At Buildkite we know flaky tests can be a huge problem. That’s why we built Test Analytics – to help you identify and fix your flaky tests for good. ;)

You can watch Philipp's UnblockConf '21 presentation here: