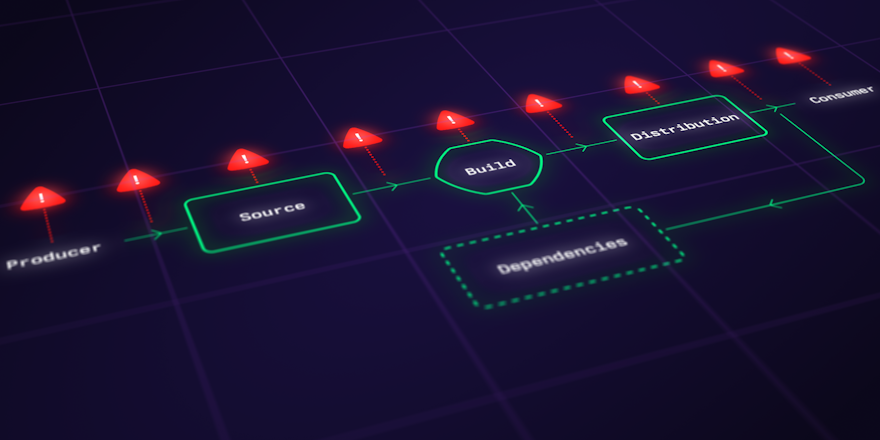

The way we create and deliver software has undergone significant transformations with the adoption of Continuous Integration and Continuous Deployment (CI/CD) practices. CI/CD significantly streamlines the software development process, enabling our teams to deliver high-quality software at a rapid pace with minimal resistance. As organizations accelerate their software delivery capabilities, how they manage the security of their software ecosystems must adapt. Continuous delivery coupled with complex environments and tooling means this can be challenging.

In this post, I’ll explore how security, compliance and governance can work in harmony with continuous integration and deployment, and help you strike the right balance between efficiency and security.

I’ve been thinking about this topic a lot. I’ve seen a lot of great talks about it, I’ve read about the lurking risks in OWASP’s Top 10 Security Risks in CI/CD, and I’ve just stumbled upon a Charity Majors thread on the subject.

The way you build accountability into software is by designing it into the architecture of your CI/CD pipeline, and pairing on code reviews.

— Charity Majors (@mipsytipsy) August 27, 2023

It's in your checksums, your fingerprints, your static application security testing... your auditable, replayable software pipeline.

Onerous, once-a-year security audits were a thing at a company I once worked at. They hadn’t always been, but as our product became more successful, more attention was focused on how we secured our software, our systems, and Personally Identifiable Information (PII). Countless hours were spent poring over numerous spreadsheets, with tasks farmed out to all the tech leads, loads of queries, and substantial manual effort.

On the flipside, I thought our engineering team's ways of working were as good as they got. We were nimble, collaborative, and responsible for the end-to-end design, testing, and delivery of features with pretty serious velocity. We were the happiest developers, and we used the most modern testing and deployment processes: infrastructure as code, Docker containers, autoscaling build agents, CI/CD, dashboards and monitoring with the right level of alerting. Yet there was this undeniably huge gulf between how we built and delivered our software and how we worked with our external security team, who were based at “head office” and who visited every now and then in their suits and ties to scrutinize our way of working. YMMV, in fact it probably does: different teams work in different ways, and different engineering leaders interface differently with security teams. But to me, audits seemed annoying and any new role-based restrictions felt…restrictive. Our developer platform needed to adapt to support me doing my job safely and securely.

How secure are your systems?

Government, health, and financial institutions have always been subject to strict compliance, auditing and governance requirements. They handle sensitive data and have been required to adhere to stringent regulatory requirements. Today, though, most of our companies handle big data sets chock full of PII, or at the very least handle sensitive data. High-profile security breaches feature in the headlines almost weekly; in 2021, over 22 billion records were exposed. Whenever security incidents occur, engineering teams scramble to limit the blast radius, and companies suffer damage to their brand and reputation, because protecting customer data is an ethical responsibility.

In March 2023, the Biden-Harris administration announced its National Cybersecurity Strategy, which shifts the responsibility for cybersecurity away from individuals and small businesses and toward entities responsible for personal data, such as software producers and vendors. It outlines plans to establish liability for software products and services, and to hold data stewards accountable for data breaches. This is intended to reduce the burden on individual users and small organizations, and to promote better cybersecurity practices and protection of sensitive data. Ethical obligations aside, with the increased likelihood of litigation and investigation, compliance and governance will be an integral part of the software delivery lifecycle.

The DORA 2022 Accelerate State of DevOps report detailed how organizations were tracking against the Supply Chain Levels for Software Artifacts (SLSA). SLSA is a framework designed to help organizations improve the integrity of their software supply chains, assigning levels based on proper provenance.

Some SLSA best practices:

- Having centralized CI/CD

- Preserving immutable:

  - build history

  - dependency metadata

- Two person reviews and approvals of any code changes

- Having signed metadata
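To make "signed metadata" a little more concrete, here is a minimal Python sketch of a build step that records an artifact's checksum and signs the record, plus a verifier that rejects tampered artifacts or metadata. The function names and record fields are my own illustrative assumptions, not part of SLSA, and the shared-secret HMAC is a stand-in to keep the example dependency-free; real pipelines typically use asymmetric signatures and a dedicated signing service.

```python
import hashlib
import hmac
import json


def build_provenance(artifact: bytes, builder: str, commit: str, key: bytes) -> dict:
    """Return a provenance record with an HMAC-SHA256 signature over its fields."""
    record = {
        "artifact_sha256": hashlib.sha256(artifact).hexdigest(),
        "builder": builder,  # hypothetical field: which CI system built it
        "commit": commit,    # hypothetical field: source revision
    }
    # Serialize with sorted keys so signer and verifier hash identical bytes.
    payload = json.dumps(record, sort_keys=True).encode()
    record["signature"] = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return record


def verify_provenance(artifact: bytes, record: dict, key: bytes) -> bool:
    """Check the signature first, then that the artifact matches the recorded digest."""
    unsigned = {k: v for k, v in record.items() if k != "signature"}
    payload = json.dumps(unsigned, sort_keys=True).encode()
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, record.get("signature", "")):
        return False
    return hashlib.sha256(artifact).hexdigest() == record["artifact_sha256"]
```

A deploy step could call `verify_provenance` before releasing anything, so an artifact that was swapped after the build, or a record that was edited, fails the check.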

On how far respondents had got in establishing these SLSA practices, the 2022 report stated that:

- 50% of respondents had completely established them

- 20-25% said they were underway (or were moderately established)

- 25% didn’t have them established at all

When asked how they were tracking in establishing Secure Software Development Framework (SSDF) practices:

- Under 20% had continuous code analysis/testing

- Just 15-20% agreed they effectively identified and addressed threats

- Less than 30% of respondents had security practices integrated as standard across projects in their development cycles

Secure Software Development practices

Given that very few software development lifecycle models explicitly address software security in detail, addressing and integrating Secure Software Development Framework (SSDF) practices in software delivery lifecycles is recommended. The results will be:

- A reduction in the number of vulnerabilities in released software.

- A reduction of the potential impact of the exploitation of undetected or unaddressed vulnerabilities.

- Fewer recurrences, because the root causes of vulnerabilities are addressed.

The SSDF is a detailed list of practical, achievable practices, broken up into four categories:

- Prepare the organization

- Protect software

- Produce well-secured software

- Respond to vulnerabilities

Shift left security

The practices outlined in the SSDF are incredibly practical and achievable. As with any framework or official regulation, they need to be adapted to your specific needs. Engineering leaders and security teams need to work together to decide on an acceptable risk tolerance, and to define secure policies and practices to be integrated as standard across all project development lifecycles.

Developers are already under immense pressure to deliver: teams are leaner and distributed, and the software landscape grows increasingly complex. Adding security to the to-be-aware-of list is a lot to ask. But given that security, compliance and governance measures are increasingly important to businesses, continuous compliance and governance will become integral to how we build and deliver software.

- Continuous compliance ensures that the software meets the necessary regulations, policies, and security standards throughout its lifecycle, from development through to production.

- Governance is about enforcing policies, security measures, and best practices in order to mitigate security risks and is necessary to ensure a secure end-to-end development process.

Shifting security left and “DevSecOps” are the hot topics of the moment. Both are about bringing security and its associated controls into the software delivery lifecycle, which allows security practices to keep pace with modern development best practices. We can automatically generate and store immutable metadata and logs, create and store mandatory compliance checks and governance measures as code in version-controlled source-code repositories, and create developer platforms that streamline and document complex tooling, our dependencies and cloud infrastructure.

Compliance and Governance challenges

Traditionally, security teams were siloed outside Engineering, DevOps and Platform teams. Auditing requirements were perceived as someone else’s problem, or as “security theater”. Developers felt their delivery best practices were hampered by rigorous checks and controls, so they tried to avoid or bypass them entirely in order to feel like they were still getting things done.

Here is a non-exhaustive list of challenges to consider and address when implementing compliance and governance in CI/CD.

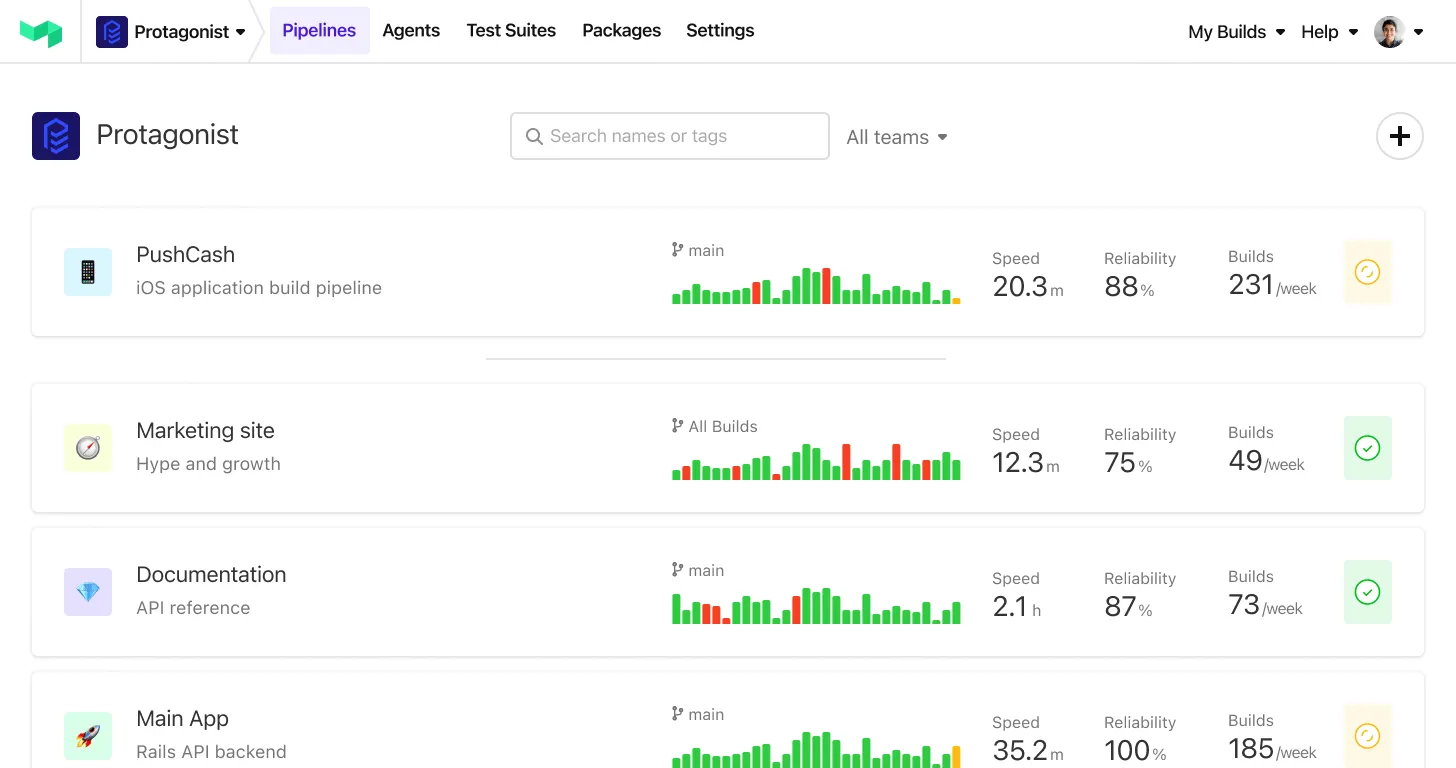

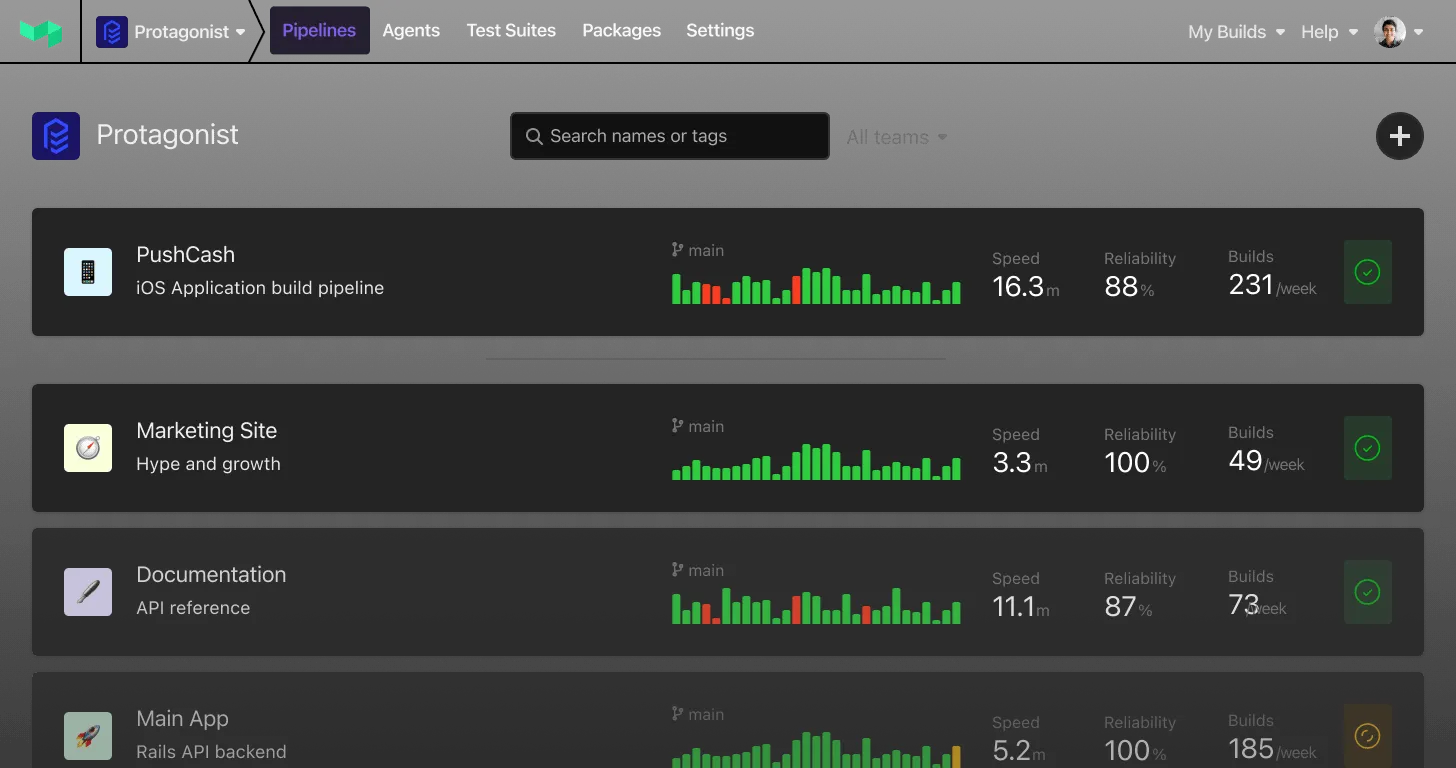

Speed vs. Security

CI/CD enables rapid development and release cycles, so thorough security reviews, manual blocks and approval processes can significantly slow things down. Ideally, security measures and compliance checks become a deliberate, yet frictionless, part of the software delivery lifecycle.

Fragmented Tooling

CI/CD pipelines involve numerous tools and services, leading to complex compliance and governance processes that can be difficult to manage cohesively.

Human error

With automation at the core of CI/CD, human errors can propagate swiftly and lead to compliance gaps if they are not monitored and addressed promptly.

Open source contributions

CI/CD pipelines can be automatically triggered to run with code contributions from various locations (sometimes untrusted). This makes it difficult to assess the validity and security of code changes.

Managing roles and responsibilities

Applying the principle of least privilege to users and build systems can be difficult, depending on your CI/CD platform.

Automation isn't the silver bullet you think it is

Automation and tooling can solve a lot, but they don’t replace teamwork. Engineering leaders need to work hard to foster trust and build collaborative working relationships between developers and security teams, so that together they can better understand business risks and design automated solutions to minimize them. We can automate all the security scans, reports, policies as code, and vulnerability detection in our toolchains, but without developer buy-in, we’ll never effectively mitigate or respond to potential threats.

Security, governance and compliance strategies for CI/CD

Use version-controlled code-based configuration where possible

Define and manage pipelines, policies, and infrastructure as code:

- Restrict access to role and policy definitions to privileged users, and create configurations (or modules) that include them so that developers can reuse them securely

- Define and manage compliance requirements as code

- Version control these alongside application code

- Use a human-readable format such as YAML so non-technical reviews and approvals can be done on actual, working compliance documents

- Adapt and deploy quickly as regulations evolve

- Make compliance definitions read-only for all non-privileged contributors
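As a rough illustration of compliance requirements defined as code, the Python sketch below evaluates a proposed change against a small policy. Everything in it is an assumption for the sake of the example: the `ChangeRequest` fields, the policy keys, and the threshold of two approvals are hypothetical, and in practice the policy would more likely live in a version-controlled YAML file than in a Python dict.

```python
from dataclasses import dataclass


@dataclass
class ChangeRequest:
    author: str
    approvers: set[str]
    completed_steps: set[str]


# Hypothetical policy; in practice loaded from a reviewed, version-controlled file.
POLICY = {
    "min_approvals": 2,
    "required_steps": {"lint", "test", "security-scan"},
}


def violations(change: ChangeRequest, policy: dict = POLICY) -> list[str]:
    """Return a human-readable list of policy violations (empty means compliant)."""
    problems = []
    # Authors approving their own change do not count as independent review.
    independent = change.approvers - {change.author}
    if len(independent) < policy["min_approvals"]:
        problems.append(
            f"needs {policy['min_approvals']} independent approvals, "
            f"has {len(independent)}"
        )
    missing = policy["required_steps"] - change.completed_steps
    if missing:
        problems.append("missing required steps: " + ", ".join(sorted(missing)))
    return problems
```

A pipeline could run a check like this as a gate and fail the build when the returned list is non-empty, which keeps the rule, its history, and its enforcement in one reviewable place.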

Generate and store immutable artifacts, audit trails, metadata and logs

- Utilize containerization or serverless architecture to create immutable artifacts that can be securely and consistently deployed.

- Configure tools and systems to generate immutable metadata and logs, stored with highly privileged, read-only access.
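One common way to make an audit trail tamper-evident is to chain entries together by hash, so that editing any earlier entry invalidates everything after it. The Python sketch below shows the idea under my own assumptions: the entry layout and function names are illustrative, and a chain like this only detects tampering, so it still needs to be stored somewhere with restricted, write-once access.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(event: dict, prev_hash: str) -> str:
    """Hash an event together with the hash of the entry before it."""
    body = json.dumps({"event": event, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(body.encode()).hexdigest()


def append_entry(log: list[dict], event: dict) -> list[dict]:
    """Return a new log with `event` appended and linked to the previous entry."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    entry = {"event": event, "prev": prev_hash, "hash": _digest(event, prev_hash)}
    return log + [entry]


def verify_log(log: list[dict]) -> bool:
    """Recompute every link; any edited, removed or reordered entry breaks the chain."""
    prev_hash = GENESIS
    for entry in log:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != _digest(entry["event"], prev_hash):
            return False
        prev_hash = entry["hash"]
    return True
```

An auditor can then re-run `verify_log` over the stored entries instead of trusting that nobody touched them.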

Use standardized security practices and tools (don't reinvent the wheel)

- Securely handle secrets in CI/CD

  - Use a dedicated secret manager such as HashiCorp Vault

- Implement real-time monitoring and auditing tools to track changes, detect anomalies, and ensure ongoing compliance across all environments.

- Automate security scans on code and its dependencies

  - Use a dedicated tool such as Snyk

- Generate and store an immutable Software Bill of Materials (SBOM) that records your software's dependencies.

  - Use a dedicated package manager such as PackageCloud

- Sign git commits, including automatically generated commits with OIDC
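To show why recording dependencies with their checksums is useful, here is a small Python sketch that builds an SBOM-like inventory and flags any dependency whose contents changed without a version bump. It is deliberately simplified: the `Dependency` type, the JSON layout and the function names are my own assumptions, and this is not a standard SBOM format such as SPDX or CycloneDX, which dedicated tools generate for you.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Dependency:
    name: str
    version: str
    content: bytes  # raw bytes of the downloaded package file


def build_inventory(deps: list[Dependency]) -> str:
    """Serialize dependencies deterministically, with a SHA-256 digest for each."""
    components = [
        {
            "name": d.name,
            "version": d.version,
            "sha256": hashlib.sha256(d.content).hexdigest(),
        }
        for d in sorted(deps, key=lambda d: (d.name, d.version))
    ]
    return json.dumps({"components": components}, indent=2, sort_keys=True)


def changed_digests(old: str, new: str) -> list[str]:
    """List name==version pairs whose digest differs between two inventories."""
    before = {
        (c["name"], c["version"]): c["sha256"]
        for c in json.loads(old)["components"]
    }
    return [
        f'{c["name"]}=={c["version"]}'
        for c in json.loads(new)["components"]
        # Components that are new in `new` have no earlier digest and are skipped.
        if before.get((c["name"], c["version"]), c["sha256"]) != c["sha256"]
    ]
```

Because the output is deterministic, two builds from the same inputs produce byte-identical inventories, which makes them easy to diff and to store alongside each release.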

Create secure boundaries for CI/CD, workflows and pipelines

- Centrally configure pipeline templates

- Create separate read and write roles for creating pipelines and editing settings

- Understand and limit who and what has access to secrets

- Restrict the scope of a pipeline’s access and permissions

- Use granular access controls such as job tokens and OIDC

- Have ingress/egress filters to the internet to restrict what requests and responses are allowed using:

- Create secure/insecure build contexts to limit potential risks associated with unsafe code, for example:

  - Feature branch PRs/builds:

    - Can run a smaller subset of steps i.e. linting, tests, security scanning

    - Can’t access sensitive information

  - Main branch PRs/builds:

    - Runs an extended set of steps including deploy steps

    - Can access sensitive information and other systems
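The secure/insecure build contexts described above can be sketched as a small decision function. This is an illustration under assumptions rather than any CI platform's actual API: the `BuildContext` type, the step names and the `from_fork` flag are hypothetical, and I've assumed that builds triggered from forks should always be treated as untrusted, even when they target the main branch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BuildContext:
    steps: tuple[str, ...]
    secrets_allowed: bool


# Feature branch builds: a smaller set of steps and no sensitive information.
UNTRUSTED = BuildContext(
    steps=("lint", "test", "security-scan"),
    secrets_allowed=False,
)

# Main branch builds: the extended set of steps, including deploy.
TRUSTED = BuildContext(
    steps=("lint", "test", "security-scan", "deploy"),
    secrets_allowed=True,
)


def select_context(
    branch: str, from_fork: bool, protected_branch: str = "main"
) -> BuildContext:
    """Pick the trusted context only for non-fork builds of the protected branch."""
    if branch == protected_branch and not from_fork:
        return TRUSTED
    return UNTRUSTED
```

Defaulting to the untrusted context means a misconfigured or unexpected trigger fails safe: it loses access to secrets and deploy steps rather than gaining them.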

Design human systems and processes for fixing issues and vulnerabilities

DevOps philosophy understands that tooling and culture are interrelated. We can automate all the things, but it's the human systems and processes that are far more critical to success than tooling alone can ever be. So ensure there are processes to triage, categorise and fix any vulnerabilities or security issues that are identified. Learn new things, and foster a culture that is engaged in creating secure software.

Conclusion

In general, engineers should NOT need to be constantly thinking about compliance -- only when setting up something new.

— Charity Majors (@mipsytipsy) August 27, 2023

Engineering productivity and performance, on the other hand, should always be on our minds. Entropy is *constantly* eating away at our efficiency.

CI/CD allows teams to innovate and deliver value more efficiently, with code changes in production in minutes. But alongside the efficiency of frictionless super-highways to production lurk significant security risks. Fortunately, we can design seamless continuous compliance and governance measures as part of the delivery and deployment process. Developers should be able to ship their code securely without delay, and companies should actively avoid being next week's big headline. It's up to us to strike the balance that we're all comfortable with.

Resources

- New Cybersecurity strategy shifts breach responsibility to Vendors, Software Providers

- DORA 2022 Accelerate State of DevOps report

- OWASP’s Top 10 Security Risks in CI/CD

- DevOps Audit Defense Toolkit

- Supply Chain Levels for Software Artifacts

- Secure Software Development Framework practices

- Best Practices for Terraform in CI/CD

- DevOps Automated Governance