If you are reading this, I assume you are a happy Buildkite customer or you just discovered Buildkite and you want to give it a try. I am a platform engineer at Elotl; we develop a nodeless infrastructure platform for microservices. Elotl’s Nodeless Kubernetes enables you to automate compute capacity management for workloads running on Kubernetes, including CI workloads like builds executed via Buildkite. One of the main reasons I value Buildkite more than other solutions in the CI/CD space is the Buildkite agent. It is a single binary that takes care of many tedious tasks you would normally have to implement yourself, such as caching and artifact uploading. I really like the fact that it can run basically anywhere, on almost any kind of hosted OS.

The challenges of managing your own build infrastructure

If you decide to self-host a fleet of Buildkite agents, you will need to decide on an infrastructure platform where the agents will run. Once the platform is ready and agents are running, you will probably need a dedicated person or team to maintain it, perform regular updates, implement monitoring, and ensure that the platform does not become a bottleneck in your development workflow. To prevent that, you have to keep waiting times for CI jobs low, meaning that there is always a free agent to run your workload. This can be tricky, as it is usually hard to estimate the ideal number of agents, especially if your engineering teams are growing rapidly. It is easy to overshoot: overprovisioning gives you low waiting times for an agent, but costs more. This is usually the moment when people realize that they need to think about an autoscaling strategy.

The benefits of an autoscaling strategy

Autoscaling can provide a lot of benefits for your business as well as for engineering teams. An optimally configured autoscaler will help your organization reduce the cost of infrastructure; and when there is a burst of activity, the infrastructure can handle it without human intervention. For engineering teams it should provide a stable, fast, and reliable platform for CI/CD.

In many cases, Kubernetes is an optimal choice for orchestration of your workloads. It comes with first class support for rolling updates, healthchecks, automatic DNS / TLS / ingress rules, and so on. There are many ways to build Kubernetes clusters. Using a managed control plane greatly reduces the time and effort needed to set up and maintain the cluster, allowing developers to focus on the core mission of the team instead of spending most of their time with infrastructure operations tasks.

Scale Buildkite agents with Kubernetes

The following is a case study of a 1+ year live deployment at an enterprise customer in the finance vertical. We used Amazon Elastic Kubernetes Service (EKS) as the default Kubernetes control plane. With EKS, the control plane runs across multiple AWS availability zones, resulting in high availability and eliminating a single point of failure. AWS takes care of patching it and ensuring that it is up to date. EKS is also very well integrated with various AWS services, such as Elastic Load Balancing, IAM for authentication, KMS for encryption, etc.

Once we have a Kubernetes cluster ready, we may start thinking about autoscaling. We have two business goals here:

- Ensure that the company is efficiently spending on infrastructure.

- Ensure that the developer experience is good, meaning that the CI/CD platform is fast and reliable.

As a developer, you want to:

- Ensure that there are enough buildkite-agent pods to satisfy current CI/CD traffic.

- Ensure that idle buildkite-agent pods are scaled in.

Those two engineering goals can be accomplished by using Kubernetes’ built-in Horizontal Pod Autoscaler.
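As a rough illustration of what the Horizontal Pod Autoscaler does, the sketch below applies the scaling formula from the Kubernetes documentation: the desired replica count is the current count multiplied by the ratio of the observed metric to its target, rounded up. The choice of metric for buildkite-agent pods, the function name, and the replica bounds are assumptions made for this example; the real autoscaler also applies a tolerance and stabilization windows that are omitted here.

```python
import math


def hpa_desired_replicas(current_replicas, current_metric, target_metric,
                         min_replicas=1, max_replicas=10):
    """Approximate the core HPA formula (tolerance and stabilization omitted).

    desired = ceil(current_replicas * current_metric / target_metric),
    clamped to the configured minimum and maximum replica counts.
    """
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Hypothetical numbers: 4 agent pods at 90% average utilization, target 60%.
print(hpa_desired_replicas(4, 90, 60))
```

Note that the result is still bounded by the capacity of the nodes underneath: the autoscaler can only request pods, not the machines to run them on.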

Your engineering team will be happy. But this is not enough.

Why?

First of all, we failed to meet our business goal; we are not saving money. Those pods still run on a fixed number of Kubernetes Nodes, which are simply EC2 instances. And those instances are running 24/7, so you are still paying for all idle EC2 instances.

Another issue is that if your CI/CD traffic increases in the foreseeable future (e.g., because you hired more engineers), your infrastructure cost will increase and it is likely that wait times will increase as well. If your organization measures time-to-deploy (TTD) and time-to-feedback (TTF) you will probably see those numbers increasing.

Introducing Buildscaler

We (Elotl) help Buildkite customers address those issues. With Elotl’s Nodeless Kubernetes, there is no need to provision and manage individual servers or maintain a large worker node pool for applications: instances running pods are provisioned on the fly to match application resource requirements, using a 1:1 pod-instance mapping. This design also improves application isolation and security, and eliminates the noisy neighbor problem: each pod runs on its own EC2 instance that is not shared with other pods. It can also provide significant cost savings, as we support EC2 Spot Instances, which can be up to 90% cheaper than on-demand ones. We also support Fargate as a compute launch type; Fargate is AWS’ Container as a Service offering, and Elotl’s Nodeless Kubernetes makes it easier to use with Kubernetes as the orchestrator.

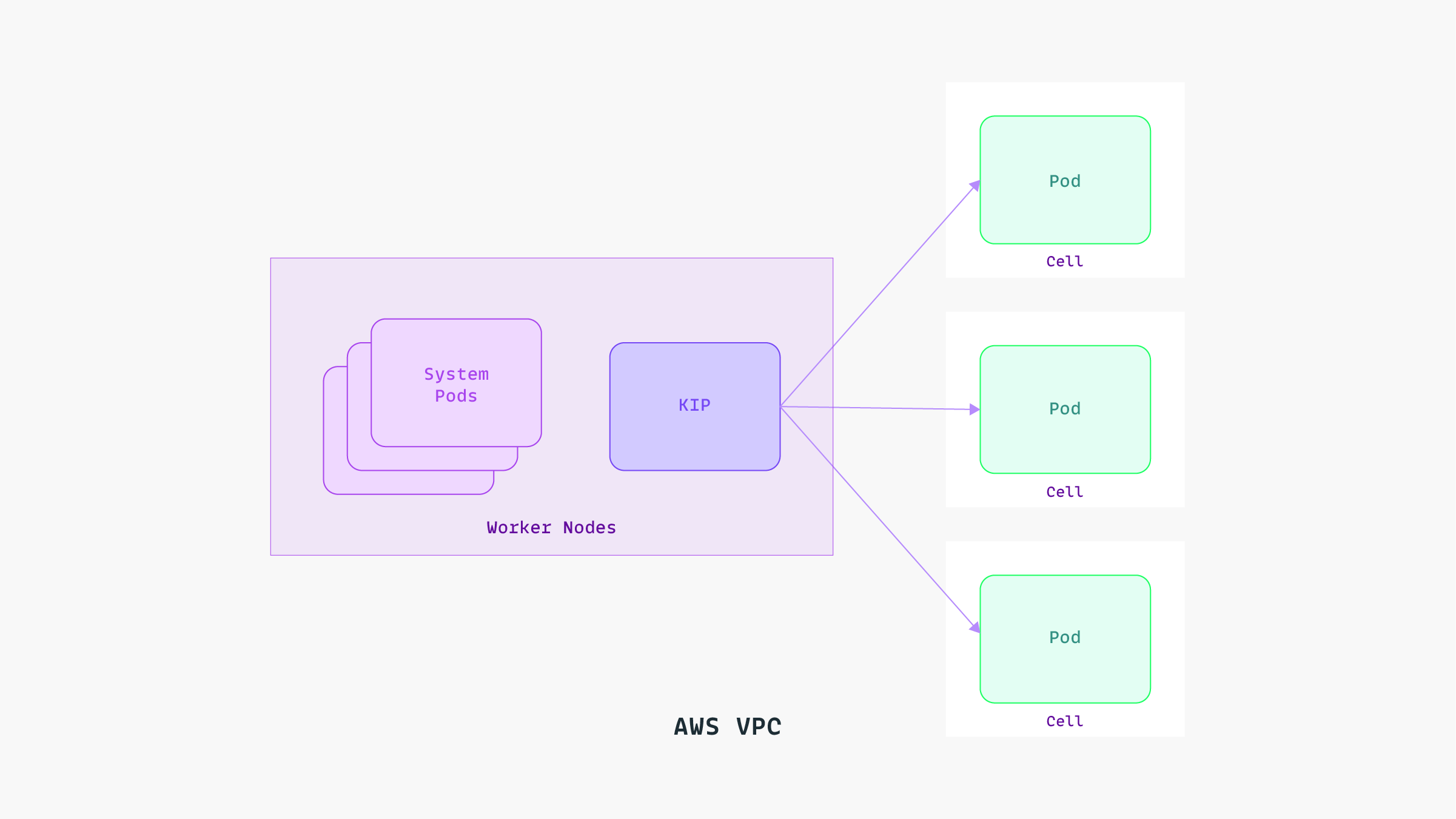

To run Nodeless Kubernetes components and a few other system pods, a small pool of worker nodes is provisioned across multiple availability zones.
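To make the cost argument concrete, here is a back-of-the-envelope sketch comparing a fixed node pool that runs 24/7 with a small baseline pool plus instances that exist only while an agent pod needs them. All numbers, prices, and function names are hypothetical and chosen only to show the arithmetic; they are not measurements from the deployment described in this article.

```python
HOURS_PER_WEEK = 24 * 7


def fixed_pool_cost(nodes, hourly_price):
    """Weekly cost of a node pool that runs around the clock."""
    return nodes * HOURS_PER_WEEK * hourly_price


def per_pod_provisioning_cost(baseline_nodes, busy_instance_hours,
                              hourly_price, spot_discount=0.0):
    """Weekly cost of a small always-on pool plus per-pod instances.

    busy_instance_hours is the total number of instance-hours actually
    consumed by agent pods; spot_discount is a fraction between 0 and 1.
    """
    baseline = baseline_nodes * HOURS_PER_WEEK * hourly_price
    burst = busy_instance_hours * hourly_price * (1.0 - spot_discount)
    return baseline + burst


# Hypothetical week: 10 fixed nodes versus 2 baseline nodes plus
# 400 instance-hours of agent pods, at $0.50 per instance-hour.
print(fixed_pool_cost(10, 0.5))
print(per_pod_provisioning_cost(2, 400, 0.5))
print(per_pod_provisioning_cost(2, 400, 0.5, spot_discount=0.5))
```

The gap grows with the share of the week during which agents would otherwise sit idle, for example nights and weekends.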

To implement autoscaling, we created Buildscaler, a Kubernetes controller that autoscales a fleet of Buildkite agent pods on the cluster. The agent pods scale up to run waiting builds and gracefully scale down when agents are idle. Since the controller gets its input from the Buildkite REST API (available pipelines, number of builds waiting, etc.), it can make informed decisions about which scaling operations to perform.
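The kind of decision such a controller makes can be sketched in a few lines. This is not Buildscaler’s actual code: the `QueueState` type, the `plan_scaling` function, and the `max_agents` cap are hypothetical names introduced for illustration, and the inputs are assumed to have already been fetched from the Buildkite API.

```python
from dataclasses import dataclass


@dataclass
class QueueState:
    waiting_jobs: int  # jobs queued with no agent to run them
    busy_agents: int   # agent pods currently running a job
    idle_agents: int   # agent pods connected but not running anything


def plan_scaling(state, max_agents):
    """Return how many agent pods to add (positive) or remove (negative)."""
    needed = state.busy_agents + state.waiting_jobs
    target = min(needed, max_agents)
    current = state.busy_agents + state.idle_agents
    delta = target - current
    if delta < 0:
        # Graceful scale-down: only idle agents are candidates for removal,
        # so a running build is never interrupted.
        delta = -min(-delta, state.idle_agents)
    return delta


print(plan_scaling(QueueState(waiting_jobs=5, busy_agents=2, idle_agents=1), 20))
print(plan_scaling(QueueState(waiting_jobs=0, busy_agents=1, idle_agents=3), 20))
```

In this sketch a positive result means pods should be created and a negative one means idle pods can be removed.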

For scaling up, new agent pods will be created in Kubernetes based on a pod template. Each Buildkite pipeline is configured with a queue tag, and pod templates are annotated with the name of the queue. Buildscaler automatically discovers available pod templates, and uses the right one matching the queue and pipeline that needs an agent.
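The template lookup described above could look roughly like the following. The annotation key `example.com/buildkite-queue`, the dictionary shapes, and the function names are assumptions for this sketch; the article does not specify the annotation Buildscaler actually uses.

```python
# Hypothetical annotation key; the real key used by Buildscaler may differ.
QUEUE_ANNOTATION = "example.com/buildkite-queue"


def index_templates(templates):
    """Map queue name -> pod template, based on each template's annotation."""
    index = {}
    for template in templates:
        annotations = template.get("metadata", {}).get("annotations", {})
        queue = annotations.get(QUEUE_ANNOTATION)
        if queue:
            index[queue] = template
    return index


def template_for_pipeline(pipeline, index):
    """Return the pod template matching the pipeline's queue, or None."""
    return index.get(pipeline["queue"])


templates = [
    {"metadata": {"name": "agent-default",
                  "annotations": {QUEUE_ANNOTATION: "default"}}},
    {"metadata": {"name": "agent-gpu",
                  "annotations": {QUEUE_ANNOTATION: "gpu"}}},
    {"metadata": {"name": "unrelated"}},  # no annotation, so it is ignored
]
index = index_templates(templates)
match = template_for_pipeline({"name": "train-models", "queue": "gpu"}, index)
print(match["metadata"]["name"])
```

Keeping the queue name on the template means a new agent pool can be added by creating one more annotated template, without changing the controller.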

It is worth mentioning that Buildscaler’s single responsibility is scaling buildkite-agent pods in and out, while Nodeless Kubernetes is responsible for scaling the infrastructure. This makes Buildscaler interoperable with any other autoscaling solution in the Kubernetes ecosystem.

Another Elotl customer, Flare.build, uses Buildscaler with Cluster Autoscaler in Google Kubernetes Engine. Here’s what Zach Gray, Flare.build’s CEO, had to say about the results:

“At Flare.build, we needed an autoscaling solution for Buildkite agents on GKE and EKS as part of our fully-managed Buildkite and Bazel offering. Buildscaler helps us scale Buildkite agents and underlying infrastructure automatically based on pending build jobs, thereby simplifying operations; this allows us to effectively and efficiently manage thousands of CI machines for hundreds of customers with a small team.”

- Zach Gray, CEO, Flare.build

Summary

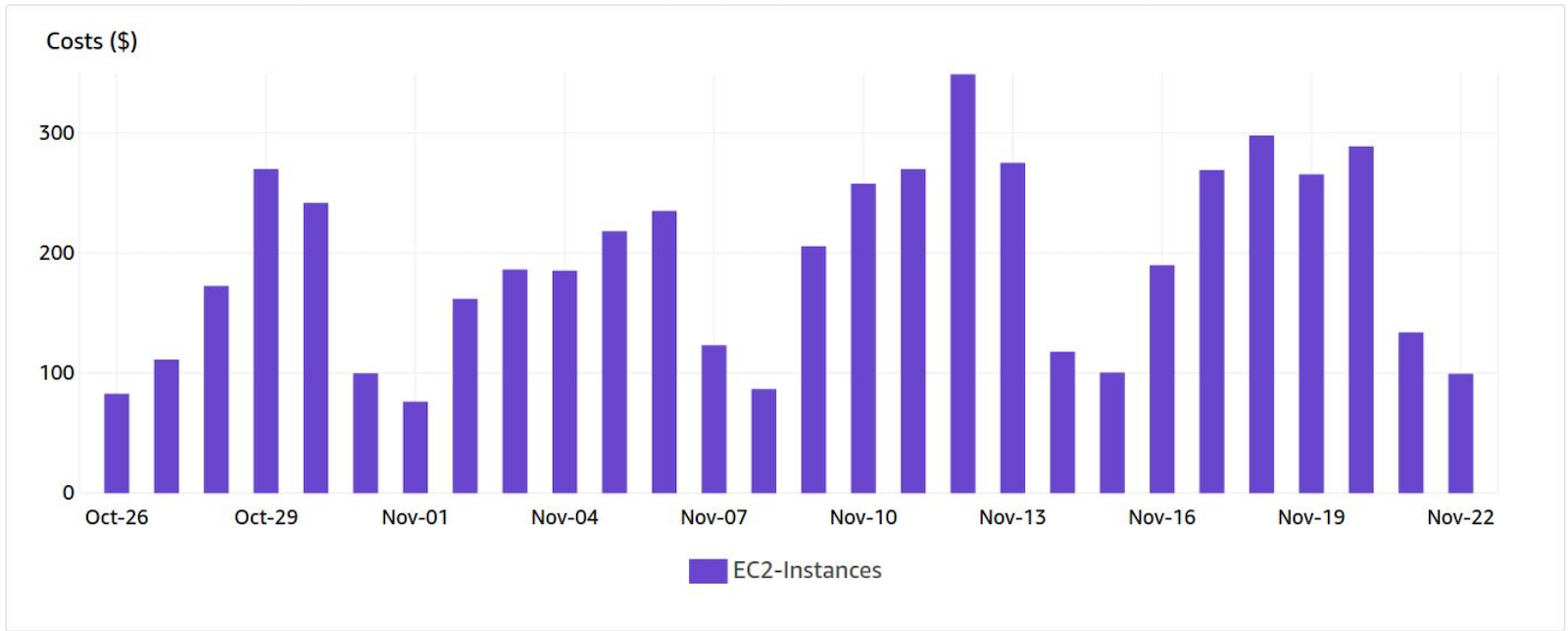

The combination of Buildscaler and Nodeless Kubernetes lets you benefit from significant cost savings (because each underlying EC2 instance runs only as long as it is needed) without sacrificing developer productivity: CI wait times stay low despite the smaller number of nodes. It is a solution that lets us accomplish the business and engineering goals we specified at the beginning of the article.

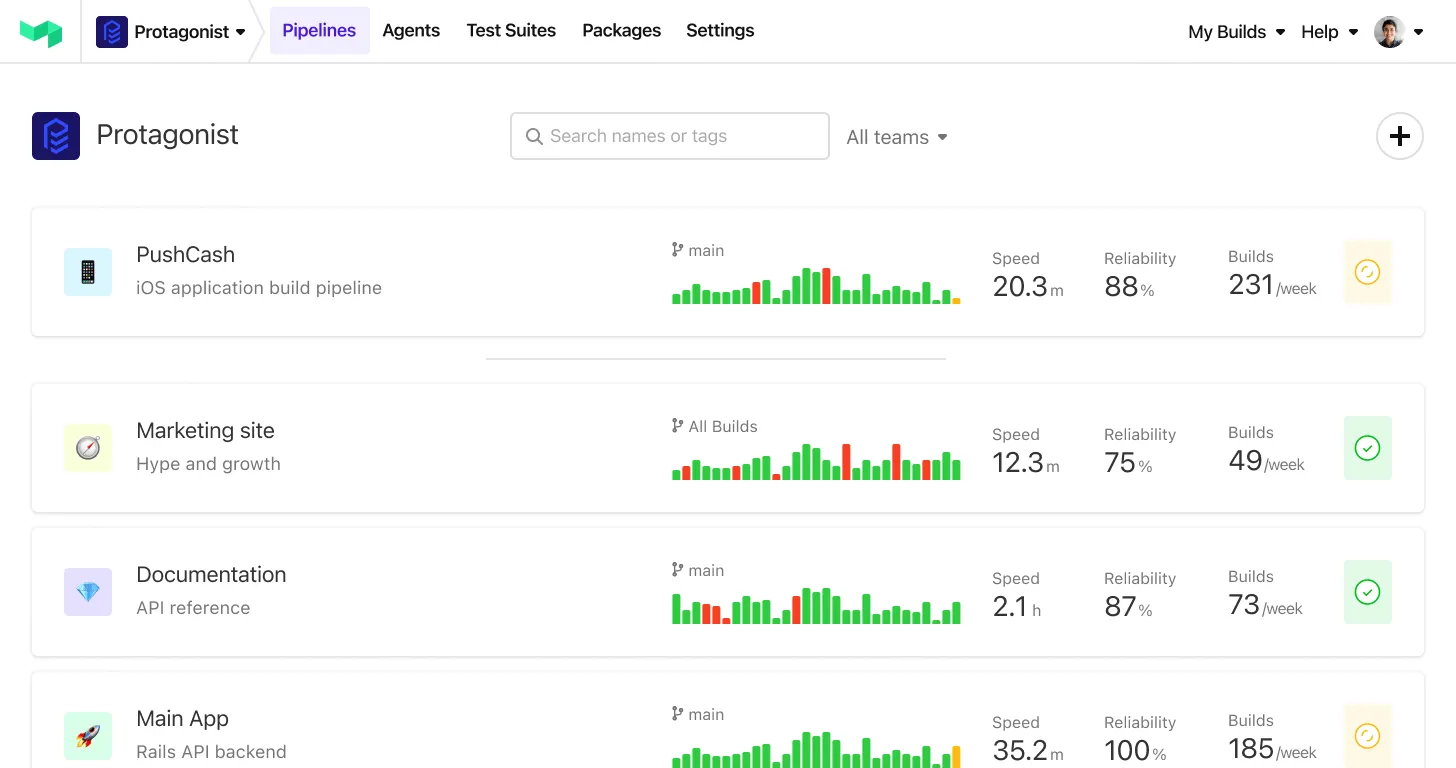

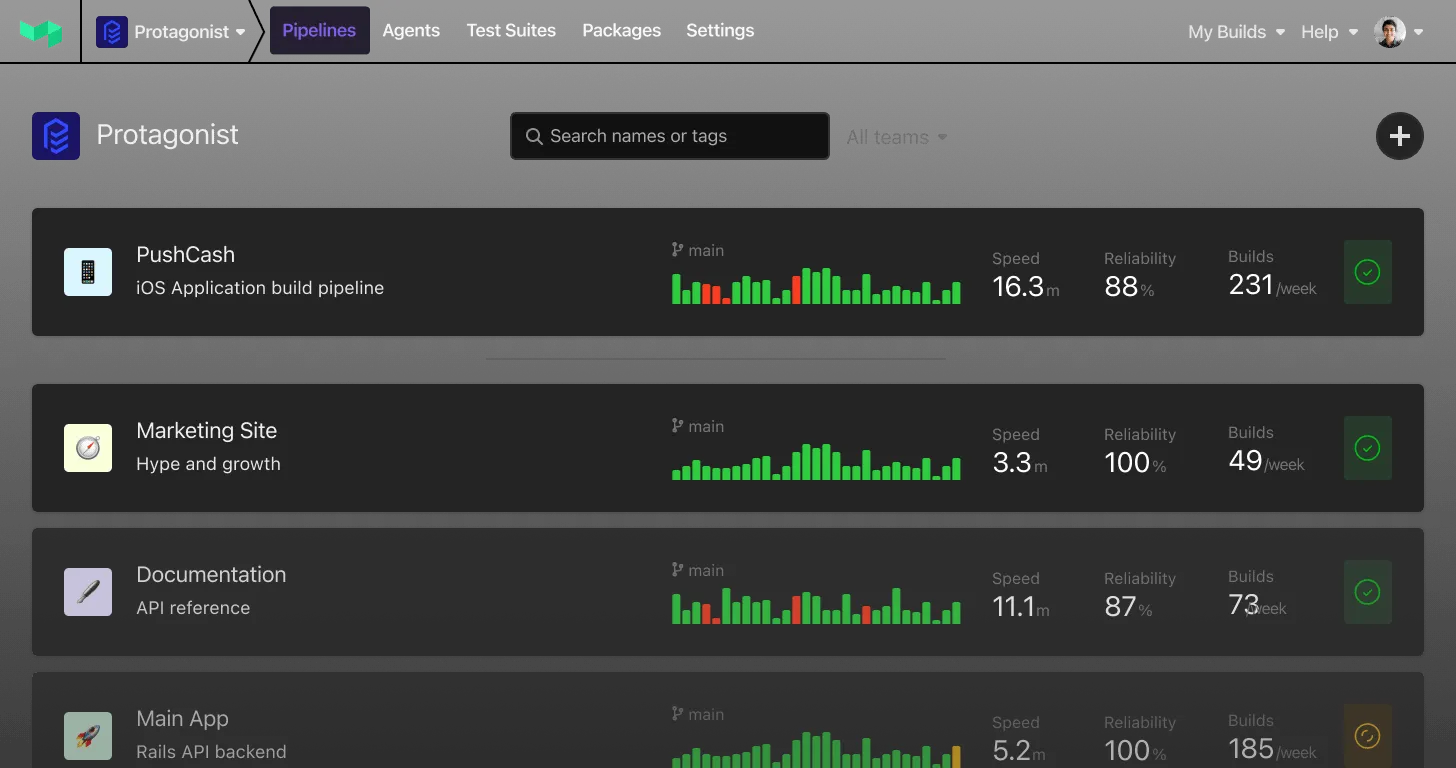

As you can see on the dashboard below, the number of running EC2 instances drops to low levels during the weekends, as there is usually no CI/CD traffic on those days.

Nodeless Kubernetes in Action

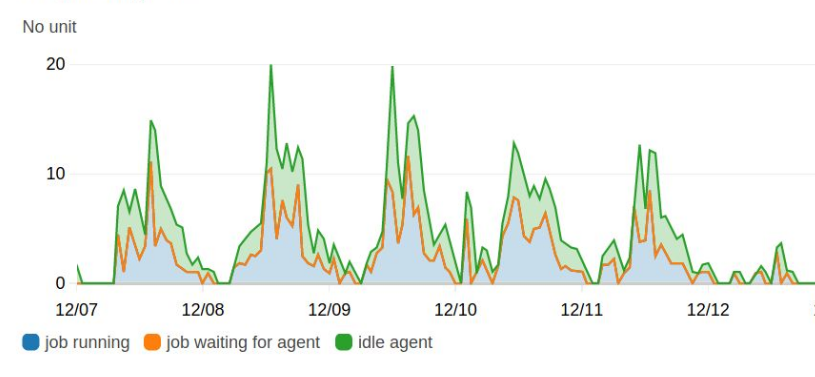

In Buildscaler, you can also configure headroom for your agent pools, so there is always an idle agent ready to run your builds; this helps to reduce wait times, as shown in the graph.
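The effect of headroom on the target pool size can be shown with a small calculation. The function and parameter names here are hypothetical, and this is only one plausible way to apply headroom: keep a fixed number of spare agents on top of current demand, up to a cap.

```python
def target_agents(busy_agents, waiting_jobs, headroom, max_agents):
    """Target pool size: current demand plus spare agents, capped."""
    demand = busy_agents + waiting_jobs
    return min(demand + headroom, max_agents)


# With no CI traffic, the pool shrinks to the headroom rather than to zero,
# so the next build can start without waiting for a new instance.
print(target_agents(busy_agents=0, waiting_jobs=0, headroom=2, max_agents=10))
print(target_agents(busy_agents=3, waiting_jobs=4, headroom=2, max_agents=10))
```

The trade-off is that each unit of headroom is an instance that runs while idle, so larger values buy lower wait times at a higher cost.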

To learn more about Buildscaler and/or try it out, please reach out to Elotl at info@elotl.co or Buildkite at hello@buildkite.com.